I’m not going to mention the drama at OpenAI. Instead I want to talk about something more fun, like regulations.

Last week both California and Florida unveiled their proposed regulatory opinions for lawyers using AI. Neither state has exactly been on what I would consider the Side of Righteousness as far as lawyer protectionism goes. California effectively strangled any efforts to explore whether non-lawyers could help people last year. Florida, well … ha ha, Florida effectively nuked any prospect of alternative legal service providers from orbit when it took the TIKD case to the FL Supreme Court and won (spurred on by the lawyers whose business model was threatened by TIKD). Still feeling threatened, Florida then salted what little fertile ground remained by rejecting a proposed regulatory sandbox in terms that made it clear innovation was not welcome. So you can understand my trepidation in learning of their efforts to regulate AI. I’m a dues-paying member of the Florida Bar, and have to grit my teeth every year when I pay them.

Reading through the proposals, though, it’s pretty clear to me that California got this one largely right (at least so far), and Florida seems very worried about a website chatbot.

California’s proposal:

You can read the full proposal here at this link. I’m going to highlight some of the portions I think are important below.

What’s notable about California’s proposal is this:

[The committee believes] that the existing Rules of Professional Conduct are robust, and the standards of conduct cover the landscape of issues presented by generative AI in its current forms.

Basically, when given the regulatory hammer, they looked around and found that the existing structure didn’t need any more nails.

California then went on to say that what’s needed is practical guidance for lawyers, including lawyer education. For the practical guidance, they cite to the MIT Task Force on Responsible Use of Generative AI for Law (disclaimer: I was a contributor to this), and mold what the task force came up with to fit the California Rules of Professional Conduct.

Additionally, California sets up requirements for addressing law student education on generative AI, which as far as I know is lacking in most law schools, along with instruction on basic technology competence.

What’s most remarkable about California’s proposal is that they said this:

Generative AI products are being developed for a multitude of uses and for a variety of professions. They are also being developed to provide legal assistance to unrepresented persons. While generative AI may be of great benefit in minimizing the justice gap, it could also create harm if self-represented individuals are relying on generative AI outputs that provide false information.

They then provide two recommendations for the Board of Trustees:

Work with the Legislature and the California Supreme Court to determine whether the unauthorized practice of law should be more clearly defined or articulated through statutory or rule changes; and [1]

Work with the Legislature to determine whether legal generative AI products should be licensed or regulated and, if so, how.

If you’re one of the three avid readers of this newsletter (one is my mom), you’ll probably remember that I’ve harped on and on about the ambiguity around what is and isn’t the practice of law, especially when it comes to “legal advice” vs. “legal information.” I don’t think the folks in CA are readers of mine, but it’s encouraging to see that they recognize the problem.

Now, granted, we could get a worst-case scenario out of this, where California basically tries to regulate AI into the ground because there’s not a clear-cut way to prevent it from giving “legal advice” (whatever that means). But I’m choosing to be optimistic.

Florida’s proposal:

Florida’s proposal for regulating AI is somewhat similar to California’s, in that they spend a lot of time talking about confidentiality and competence, but Florida kind of takes a left turn when it comes to the “duty to supervise.”

While Rule 4-5.3(a) defines a nonlawyer assistant as “a person,” many of the standards applicable to nonlawyer assistants provide useful guidance for a lawyer’s use of generative AI.

Florida then goes on to analogize, or really anthropomorphize, AI into the role of a legal assistant that must be supervised (in that their work product has to be reviewed). All well and good - it’s easy for people to anthropomorphize this technology, and here it’s probably a useful frame of reference.

But a short distance later, the opinion really digresses into website chatbots:

Further, a lawyer should carefully consider what functions may ethically be delegated to generative AI. Existing ethics opinions have identified tasks that a lawyer may or may not delegate to nonlawyer assistants and are instructive. First and foremost, a lawyer may not delegate to generative AI any act that could constitute the practice of law such as the negotiation of claims or any other function that requires a lawyer’s personal judgment and participation.

Florida Ethics Opinion 88-6 notes that, while nonlawyers may conduct the initial interview with a prospective client, they must:

Clearly identify their nonlawyer status to the prospective client;

Limit questions to the purpose of obtaining factual information from the prospective client; and

Not offer any legal advice concerning the prospective client’s matter or the representation agreement and refer any legal questions back to the lawyer.

This guidance is especially useful as law firms increasingly utilize website chatbots for client intake. While generative AI may make these interactions seem more personable, it presents additional risks, including that a prospective client relationship or even a lawyer-client relationship has been created without the lawyer’s knowledge.

The Comment to Rule 4-1.18 (Duties to Prospective Client) explains what constitutes a consultation:

A person becomes a prospective client by consulting with a lawyer about the possibility of forming a client-lawyer relationship with respect to a matter. Whether communications, including written, oral, or electronic communications, constitute a consultation depends on the circumstances. For example, a consultation is likely to have occurred if a lawyer, either in person or through the lawyer’s advertising in any medium, specifically requests or invites the submission of information about a potential representation without clear and reasonably understandable warnings and cautionary statements that limit the lawyer’s obligations, and a person provides information in response. In contrast, a consultation does not occur if a person provides information to a lawyer in response to advertising that merely describes the lawyer’s education, experience, areas of practice, and contact information, or provides legal information of general interest. A person who communicates information unilaterally to a lawyer, without any reasonable expectation that the lawyer is willing to discuss the possibility of forming a client-lawyer relationship, is not a “prospective client” within the meaning of subdivision (a).

Similarly, the existence of a lawyer-client relationship traditionally depends on the subjective reasonable belief of the client regardless of the lawyer’s intent. Bartholomew v. Bartholomew, 611 So. 2d 85, 86 (Fla. 2d DCA 1992).

For these reasons, a lawyer should be wary of utilizing an overly welcoming generative AI chatbot that may provide legal advice, fail to immediately identify itself as a chatbot, or fail to include clear and reasonably understandable disclaimers limiting the lawyer’s obligations.

I’m sorry for quoting that in full there, but it’s kind of odd that they devote that much space to discussing whether or not a person will suddenly think they’re being legally represented by a website chatbot. This could have easily been handled by saying something like “lawyers must affirmatively disclose the use of generative AI in any client-facing technology, such as a chatbot.” Instead we get this really verbose yet vague language, without any real guidance on how to convince a website visitor that an AI chatbot isn’t a lawyer. What we’ll probably end up with is something like this:

[ChatBot] Thank you for visiting our website! I’m Bartleby, a friendly chatbot here to help you! Can I answer a question?

[Person] do you take petty theft cases

[ChatBot] Before I answer your question I must disclose that I am a robot and not a human being! Please answer yes or no that you understand!

[Person] yes

[ChatBot] Great! Also I must disclose that I cannot give legal advice! Do you understand! Please answer yes or no!

[Person] yes

[ChatBot] Great! Also please be aware that I cannot create an attorney-client relationship with you, or between you and Dewy, Cheatum, & Howe PLLC. Please indicate yes or no! Yes means that you understand that by using this chatbot you do not expect the creation of an attorney-client relationship with the afore-mentioned law firm, its individual lawyers, or any subsidiary of same! No means that you do not understand!

[Person] (leaves website)

I’m honestly not sure that any lawyer in their right mind would put a chatbot on their website if those are the hoops it has to jump through just to answer a logistical question like “what hours are you open tomorrow.” What’ll probably happen is that lawyers will go on competitors’ websites to try and trick their chatbots into saying that they’re lawyers, and then file bar complaints. It’s turtles all the way down.

Interestingly, Florida doesn’t recommend any kind of education for either lawyers or law students, although you could probably put together a 2-hr CLE on those chatbot vagaries pretty easily.

What really concerns me about Florida’s proposal is the glaring implication that they believe an AI is capable of “the practice of law,” without saying anything more about it. This could lead to the worst-case scenario I mentioned before, where the Florida Bar puts on its “I can regulate everything” pants and tries to apply a nebulous case-by-case evaluation framework to AI.

Can you imagine a world where the Florida Bar gets to review every AI-person interaction that occurs within state lines because the AI may or may not have crossed into forbidden territory? I can.

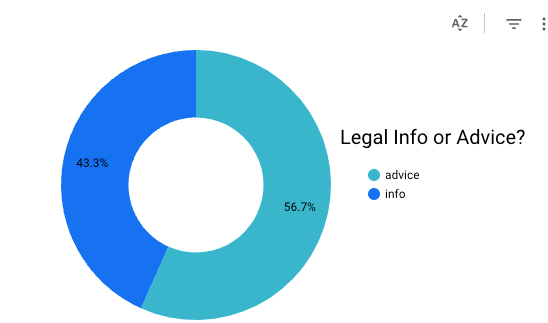

What Florida should’ve done is follow California’s lead and call for the “practice of law” to be more clearly defined. One interesting conclusion from my survey on AI answering legal questions is that just the presence of a disclaimer dramatically reduced the number of answers that people thought were “legal advice”:

No Disclaimer / Inadequate Disclaimer:

Adequate Disclaimer:

So maybe offer some practical guidance, such as “AI companies should strive to have their models offer an adequate disclaimer when the model is asked a legal question. While we cannot provide set language for a disclaimer, here are the essential elements of one: that this is not legal advice, and for legal help the person should contact a lawyer or legal organization.”

Conclusion:

So it seems, at least from my reading, that California is actually concerned with moving the ball forward here, while Florida is more concerned with website chatbots. There’s a whole section in both proposals having to do with how lawyers charge for time, which I found interesting but didn’t have the attention span to get to here. But no matter what, the next few months will be interesting.

Any typos you find are direct evidence of complete moral failure (on your part).

[1] Emphasis mine