Tell me if this sounds familiar:

Scenario 1: Around 38% of people say they’re somewhat or very likely to consider switching to a new and exciting version of a technology that they use every day. But so far, only about 8% have actually done so.

Scenario 2: 47% of people say a new technology is going to transform what they do every day, but only somewhere between 12% and 25% have actually purchased or invested in that technology.

Scenario 1, if you didn’t already know, has to do with electric cars.

Scenario 2, and you probably guessed this by now, has to do with lawyers and generative AI.

It’s really easy to opine in favor of something new. It’s really hard to actually go out and buy that new thing, especially if what you’re using now works fine and is affordable. Talk, as they say, is cheap.

Take electric cars, for example: Pretty much anyone who has ridden in a Tesla, Rivian, Mustang Mach-E, or F-150 Lightning (ok, maybe not a Chevy Volt) has said some variety of “Wow, this is definitely the next big thing.” One of my family members has a Rivian R1T and riding in that thing is like being inside the iPhone of cars when everyone else is riding around in a 2007 Nokia 1100.

But only 8-ish% of people actually own an electric car or truck. Why? Because they’re incredibly expensive and horribly inconvenient. They’re roughly double the price of a similarly-equipped gas guzzler, and the public charging infrastructure is a clown show happening in a dumpster fire.[1]

Talk to any timecard-punching lawyer about generative AI, and they’ll probably tell you they’ve heard of it, and that AI is definitely the Next Big Thing. But very few, if any, will actually be using it day-to-day. Obviously a $20/month subscription to ChatGPT Plus is different than spending $85,000 on a mid-size pickup comparable to one that costs $40,000, but I think that the AI maximalists on LinkedIn underestimate the inconvenience and cost of breaking in a new tool.

Switching costs, lock-in, and lawyers:

In technology there are concepts called “switching costs” and “lock-in.” Switching costs are essentially the hidden costs of switching from product A to product B. Even if product B is newer, cheaper, and does more cool stuff better than product A, the switching cost can be prohibitively expensive, because of the time required to move everything, to train staff on how to use product B, to manage the switch, to do data migration, and so forth. This leads to lock-in, where even though product B is cheaper, faster, and better, the costs of switching are just too dang high to justify it.

With electric cars, the costs are still prohibitively high even though the cars themselves are objectively better. There are still switching costs of making sure you can plug it in at home, figuring out if you can charge it when you’re away from home, and whether or not you can even take the kids in it to grandma’s house if it’s more than 300 miles away.

With AI, although the upfront costs are low, there are still significant switching costs. A lot of what most lawyers do is either rote form work, or work that requires a lot of very thoughtful analysis. Neither area is a good fit for generative AI. You don’t want inventiveness when it comes to creating a standard Notice of Appearance or a plea deal; you need something that fills in blanks. Nor do you want to spend all your time proofreading a rough draft when you should be spending your attention actually doing a thoughtful analysis.

I think a lot of lawyers are very intrigued by AI, but are (correctly) put off by the switching costs. There have been a lot of New Legal AI Products out on the market, and a lot of incentive to look innovative by creating one. But I’d bet in the next few months we’ll see some articles talking about how the AI hype has fizzled out, and maybe one of the four people who read this (five if you count my mom) will be able to say “you know, I bet the reason is the high switching costs.” Remember - the LinkedIn Legal AI Influencers may do a lot of cool posting, but they ain’t buying shit.

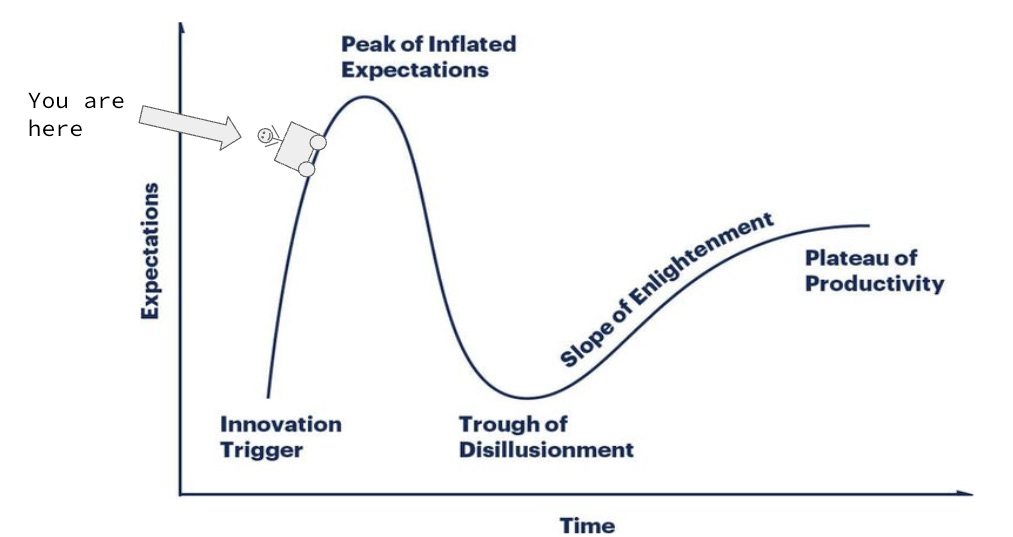

The trough of disillusionment:

After a lot of time spent working in whatever legal technology is (field, industry, profession, mutual admiration society), my thinking has come down to this:

It’s ok to be impressed by new technology;

It’s good to experiment with new technology and see what it actually does (and doesn’t do);

It’s better to think critically about new technology and not succumb to the hype guys; and

It’s best to think about new technology as if you’re already in the trough of disillusionment.

Here’s what I mean by that last point.

In the Gartner Hype Cycle, the trough of disillusionment is where the hype goes to die, and where people actually start to figure out what something is good for. That’s where you try and understand how the puzzle pieces actually fit together into something people will actually pay for, not just what they say they want.

A year ago tomorrow I wrote a dumb post on here about using ChatGPT for the first time, and how it has potential in the legal field. Over the last 365ishhh days I’ve been amazed at what the new AI models can do, and I really believe they can be transformative. I will tell you that I have barely resisted staying up all night coding out some new solution to some pressing issue I made up in my head like I was in a montage from Halt and Catch Fire. But maybe the ways that AI will transmogrify law practice and legal help haven’t yet been fully fleshed out (or are so mundane as to be dismissed by the AI Thinkfluencers). Unfortunately that line of thinking doesn’t land seed capital.

I’ve tried to live in that disillusionment trough over the past year, not because I’m a jerk and a skeptic (although I am both of those things), but because I really want to try and understand how AI can be actually useful in helping people access justice. And I still don’t have anything to sell you.

[1] And don’t come at me with that “oh well you should just charge at home” bullshit. What about people in apartments or in cities? What about if I want to drive somewhere outside of a 150-mile radius?