One of the things that I’m increasingly tired of hearing is the “lawyers who don’t use Generative AI will be replaced / become obsolete / something bad” trope. Usually I see this kind of thing in a LinkedIn post by a vendor selling some kind of “legal AI” tool.

Honestly, I’m very curious to know what lawyers who use AI are actually using it for (besides replacing non-AI-using lawyers), so a recent paper from Colleen V. Chien and Miriam Kim at Berkeley caught my eye. The researchers gave legal aid orgs free access to off-the-shelf AI tools such as ChatGPT Plus, CoCounsel, Claude, and Gavel, and recorded how the tools were used. It’s very interesting reading.

The big takeaway for me here is the stickiness of a general-purpose tool over ones that are law-practice-specific.

The table of data they collected offers some insight into why this may be: tasks like writing (both non-legal and legal) and brainstorming were high in number, whereas pure legal research seemed less frequent.

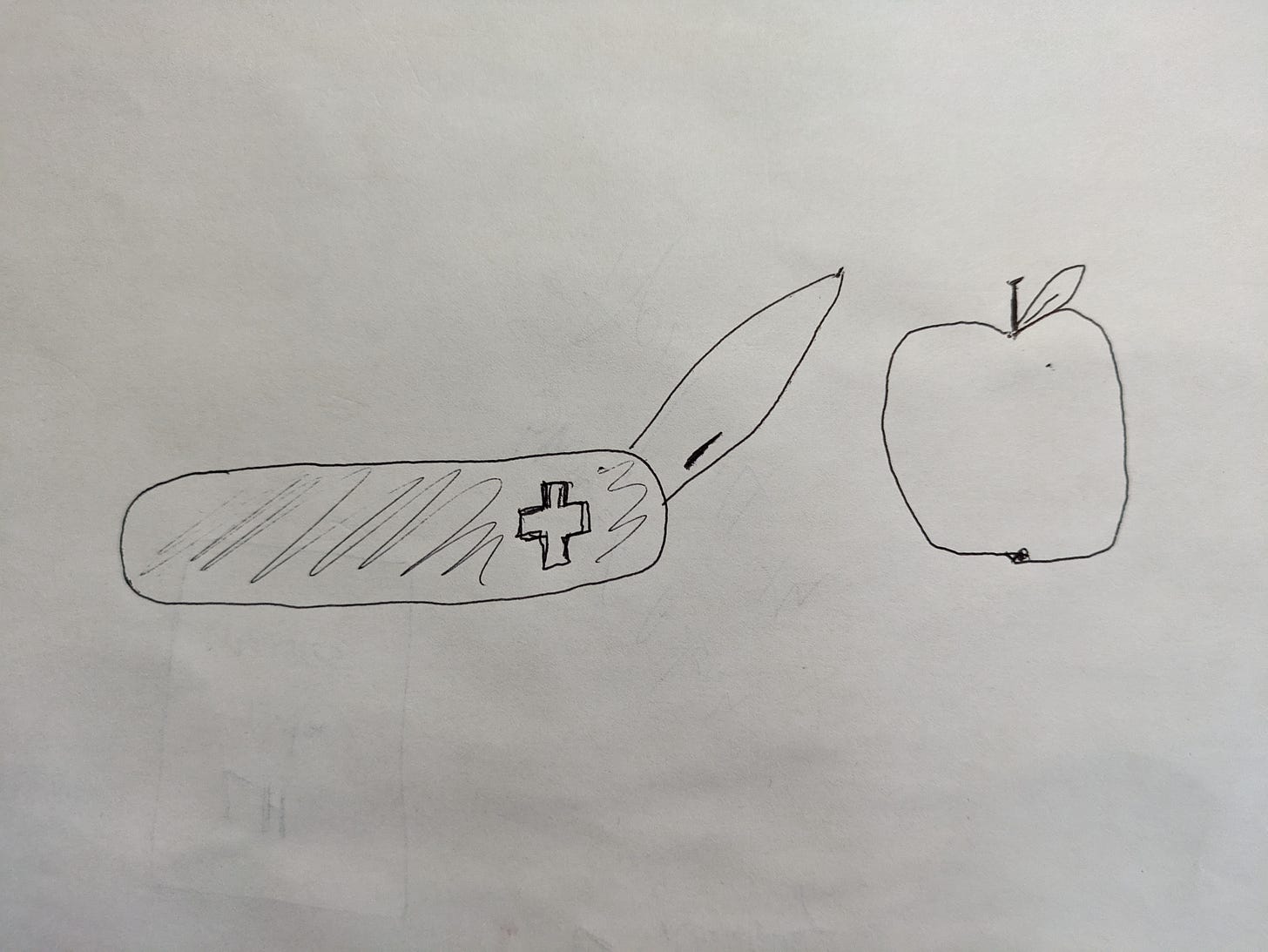

Yes, ChatGPT Plus isn’t “built by lawyers, for lawyers,” and no, it isn’t a legal-domain-specific tool. But if you’re out there trying to create the next big AI-for-lawyers tool, I think you need to take a hard look at whether whatever you’re building is going to be better than ChatGPT. Oftentimes a Swiss Army Knife cuts an apple just as well as a custom-made apple-cutting device.

The year of finding out:

I tend to think that 2024 will be the year where we maybe hit the “trough of disillusionment” in the Gartner Hype Cycle with AI. On the F-A-F-O timeline, this can be thought of as the “finding out” period.

A good quote from Garbage Day:

My theory is that we’re beginning to hit a wall with generative AI. It’s not that it isn’t getting better — it is — but we’re getting a clearer understanding of what it can and can’t do. And the reality is that AI is sort of boring. Which is fine, of course, but I don’t think AI companies and AI accelerationists are particularly happy about that. And so I think we’re going to see a lot of overstating of what AI can do over the next few months as they try and keep the hype cycle going.

Boring is great, to be honest. A lot of the things AI is quite good at are boring things. Classification, data extraction, summarization, and other stuff that’s boring as all hell can be outsourced to an LLM in a few lines of code. It’s not exciting but it’s useful.
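To make “a few lines of code” concrete, here’s a minimal sketch of document classification in Python. Everything named here is my own illustration: `complete` is a hypothetical stand-in for whatever LLM client you use (a function that takes a prompt and returns the model’s reply), and the labels and the canned `fake_complete` reply exist only so the sketch runs without an API key.

```python
from typing import Callable, Sequence


def build_prompt(text: str, labels: Sequence[str]) -> str:
    """Ask the model to pick exactly one label for the text."""
    options = ", ".join(labels)
    return (
        f"Classify the document below as exactly one of: {options}.\n"
        "Reply with the label only.\n\n"
        f"Document:\n{text}"
    )


def classify(text: str, labels: Sequence[str], complete: Callable[[str], str]) -> str:
    """Return one of `labels`, or "unknown" if the reply matches none of them.

    `complete` is a hypothetical stand-in for an LLM client call:
    it takes a prompt string and returns the model's reply as a string.
    """
    reply = complete(build_prompt(text, labels)).strip().lower()
    for label in labels:
        if label.lower() == reply:
            return label
    return "unknown"


# Canned stand-in so the sketch runs without a network call or an API key.
def fake_complete(prompt: str) -> str:
    return " Lease \n"


LABELS = ["lease", "nda", "engagement letter"]
result = classify("Tenant shall pay rent on the first of each month.", LABELS, fake_complete)
print(result)
```

Swapping `fake_complete` for a real client call is the only change needed to point this at an actual model. Checking the reply against the allowed labels is the boring-but-useful part: anything unexpected falls back to "unknown" rather than getting passed along as if it were a valid category.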

I do wonder if the people saying that all apps now have to have AI are correct. Maybe that’s true for raising venture capital, but do actual practicing lawyers care? My guess is they want stuff that works. They’re happy with the Swiss Army Knife if it’s close at hand and does the job.

This piece in Business Insider also highlights (although it’s not the point of the article) the value in boring:

As for the nontech companies talking about AI, it's hard to tell what exactly anyone means or what's hope versus reality. I recently found myself in a conversation with a bank executive who touted her firm's efforts in generative AI. When I pressed to find out what she was talking about, thinking it was something big, she told me they were figuring out how to use AI to help representatives in call centers look up information. That's probably nice for newer employees who are trying to get the hang of things. However, it isn't game-changing.

Boring is ok! Boring doesn’t make great ad copy, sure, but boring is useful. At the end of the day I think the question legal tech companies need to ask themselves is whether they’re out to raise venture capital (so the founders can become accredited investors and put money into hedge funds), or whether they want to do something useful.